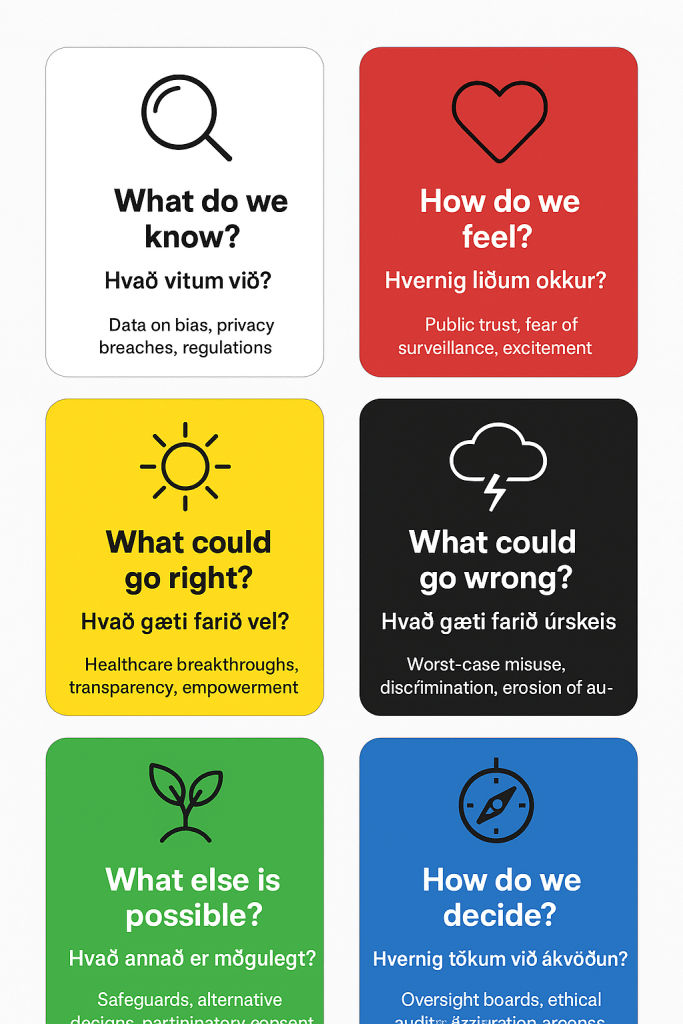

The Hats

The Six Thinking Hats framework works well for exploring technology ethics. It helps teams examine ethical questions from multiple perspectives, so that decisions are balanced and considered rather than reactive or one-sided.

Here’s how each hat can be applied to tech ethics:

White Hat – Facts and Data

Gather factual information about the technology:

- What does the tech do?

- What data does it collect or process?

- What are the known ethical guidelines or regulations?

Red Hat – Emotions and Intuition

Acknowledge emotional and intuitive responses:

- How do people feel about this technology?

- Does it inspire trust or fear?

- What moral instincts are triggered?

Black Hat – Risks and Cautions

Recognize ethical risks and potential harm:

- Could it invade privacy or reinforce bias?

- What unintended consequences might arise?

- Who could be negatively affected?

Yellow Hat – Benefits and Positives

Highlight ethical opportunities and benefits:

- How can this technology improve lives?

- Can it promote fairness, accessibility, or sustainability?

- What positive social impact could it have?

Green Hat – Creativity and Alternatives

Encourage innovative ethical solutions:

- How can we design this tech more responsibly?

- What choice models or safeguards can we create?

- How can we turn ethical challenges into opportunities for innovation?

Blue Hat – Process and Oversight

Guide the ethical discussion and guarantee accountability:

- How will we track ethical compliance?

- Who is responsible for ongoing evaluation?

- What frameworks or principles will guide decisions?

(Optional) Purple Hat – Future Vision

Add a long-term ethical perspective:

- How will this technology shape society in 10–20 years?

- What values do we want to preserve or promote?

- How can we guarantee our innovations align with a sustainable, humane future?

Using the hats in this way helps leaders and teams move beyond compliance checklists. This approach leads to a deeper, more holistic ethical reflection. It integrates logic, emotion, creativity, and foresight.

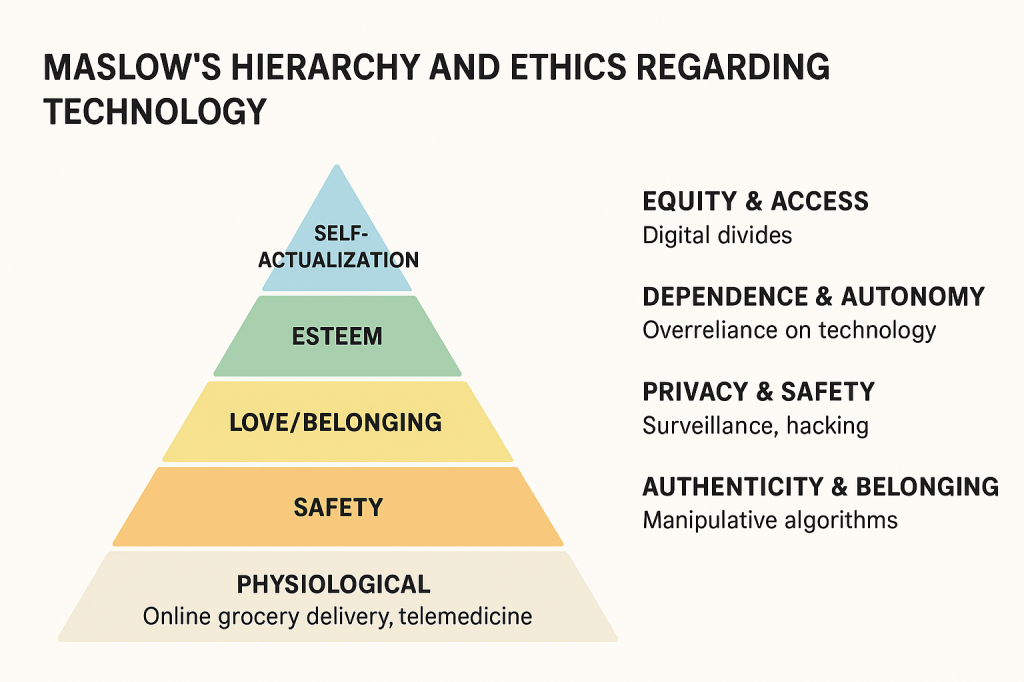

The pyramid above integrates Maslow’s hierarchy of needs with ethical technology considerations.

It shows each layer of Maslow’s pyramid (Physiological, Safety, Love/Belonging, Esteem, and Self-Actualization) alongside ethical overlays such as equity, privacy, sustainability, and autonomy.

In short: Technology reshapes how Maslow’s hierarchy of needs is met. However, it also raises ethical questions about dependence, equity, privacy, and human dignity. Ethics in technology asks whether digital tools truly support human flourishing across all levels of the hierarchy or undermine them.

🧩 Maslow’s Hierarchy in the Digital Age

Maslow’s framework describes human motivation as layered needs, from basic survival to self-actualization. Technology now intersects with each level:

- Physiological needs: Online grocery delivery, telemedicine, and digital access to water/energy systems help meet basic survival needs. Yet reliance on fragile supply chains and digital platforms can create vulnerabilities.

- Safety needs: Smart homes, IoT security, and digital banking provide predictability and control. But cybersecurity threats, outages, and surveillance risks can destabilize this sense of safety.

- Love and belonging: Social media, messaging apps, and online communities fulfill connection needs. However, they also risk fostering isolation, misinformation, or manipulative design that exploits our social drives.

- Esteem: Digital platforms enable recognition (likes, followers, professional networks). Yet they can distort esteem into dependence on external validation, raising ethical concerns about mental health and manipulation.

- Self-actualization: Technology enables creativity, learning, and global collaboration. But it also raises questions about authenticity, autonomy, and whether algorithms limit or expand human potential (source: LinkedIn).

⚖️ Ethical Dimensions of Technology

Technology ethics asks: Does digital innovation enhance or erode human dignity across Maslow’s hierarchy?

- Equity & Access: Who gets to meet their needs through technology? Digital divides mean some communities lack access to basic digital tools, creating inequality.

- Dependence & Autonomy: Overreliance on technology can undermine resilience. Ethical design should empower, not control, human agency.

- Privacy & Safety: Meeting safety needs digitally requires strong protections against surveillance, hacking, and misuse of personal data.

- Authenticity & Belonging: Platforms must balance connection with honesty, avoiding manipulative algorithms that exploit social needs.

- Sustainability: Ethical technology must consider ecological impacts, ensuring that meeting today’s needs doesn’t compromise future generations.

🌍 Integrating Ethics into Maslow’s Framework

Some scholars propose revising Maslow’s hierarchy for an AI-driven world by adding adaptability, digital literacy, and ethical awareness as foundational needs (source: LinkedIn). This reframing suggests that in the 21st century, technology is not just a tool but a structural condition for meeting human needs.

✨ Takeaway

Technology can be seen as both a bridge and a barrier in Maslow’s hierarchy. Ethically, the challenge is ensuring that digital systems genuinely support human flourishing. They should help people feel safe, connected, valued, and capable of self-actualization. These systems must do this without exploiting vulnerabilities or deepening inequalities.

Presentation – Tech Ethics and Maslow’s Hierarchy by Guðbjörg Eggertsdóttir

Tech Ethics and Maslow’s Hierarchy of Needs – Ethical Focus

Maslow’s hierarchy of needs provides a useful lens for understanding how technology intersects with human well-being. Viewed through this framework, tech ethics is not just about compliance or innovation. It is about whether technology supports fundamental human needs or undermines them.

1. Physiological Needs

At the base of the hierarchy are essentials like food, water, and shelter.

Ethical focus:

- Ensuring fair access to technology that supports survival — like clean water systems, agricultural tech, or health monitoring devices.

- Avoiding exploitation of vulnerable populations in the production of tech (e.g., unsafe labor conditions in supply chains).

2. Safety Needs

This level includes physical safety, cybersecurity, and data protection.

Ethical focus:

- Designing systems that protect users’ privacy and security.

- Preventing misuse of data, surveillance, or algorithmic bias that can harm individuals or communities.

- Building trustworthy AI and transparent governance around emerging technologies.

3. Love and Belonging

Technology deeply influences how people connect and form relationships.

Ethical focus:

- Encouraging authentic connection rather than addiction or polarization.

- Designing social platforms that foster empathy, inclusion, and meaningful interaction.

- Preventing online harassment and misinformation that erode trust and belonging.

4. Esteem Needs

People seek respect, recognition, and self-worth.

Ethical focus:

- Avoiding systems that manipulate self-esteem through comparison or validation metrics (e.g., likes, followers).

- Promoting digital literacy and empowerment so users feel capable and valued.

- Ensuring fair representation and accessibility for all identities and abilities.

5. Self-Actualization

At the top of the hierarchy is the pursuit of purpose, creativity, and personal growth.

Ethical focus:

- Designing technology that enhances human potential — learning platforms, creative tools, and well-being apps.

- Encouraging mindful use of tech to support reflection, compassion, and balance rather than distraction.

- Aligning innovation with human flourishing, not just profit or efficiency.

In essence:

Ethical technology should serve human development at every level of Maslow’s hierarchy, from survival to self-actualization. When tech design and policy follow this framework, innovation becomes a force for holistic well-being rather than exploitation or disconnection.

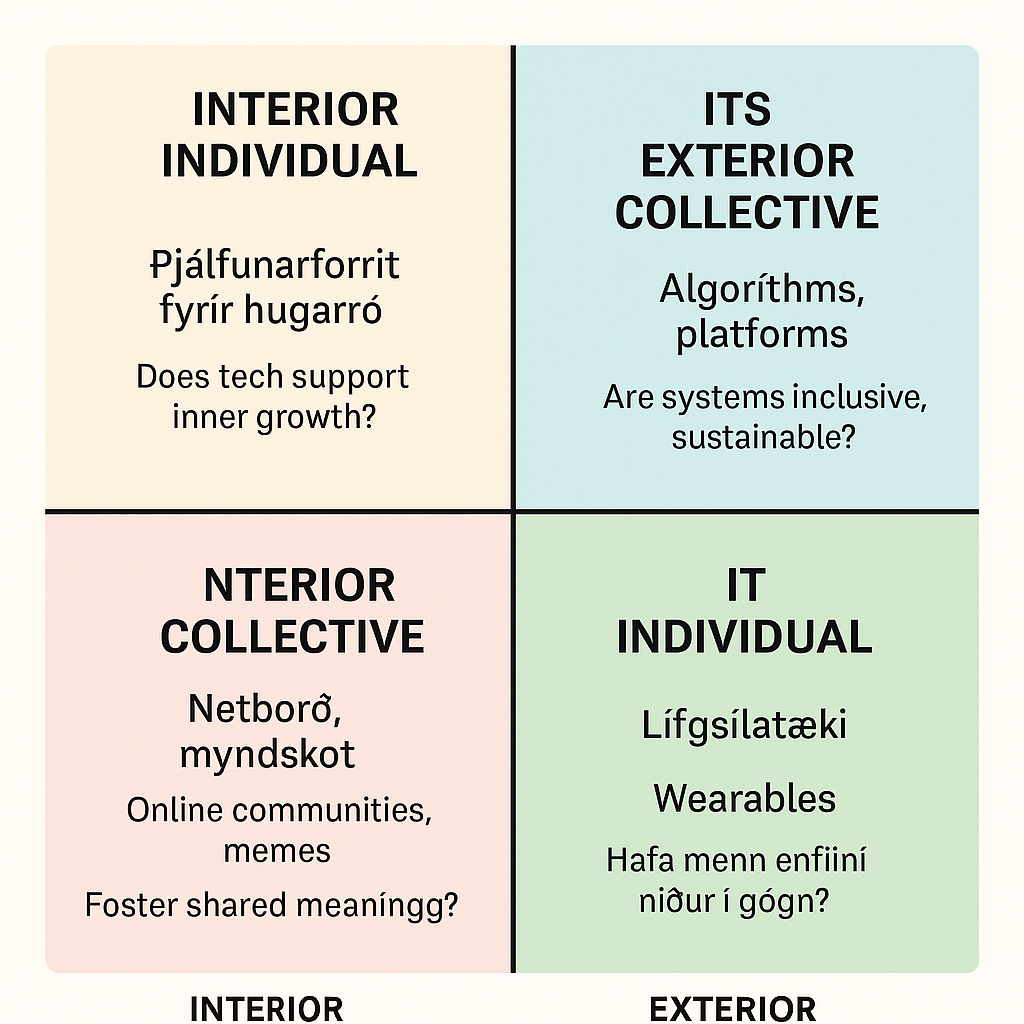

The bilingual AQAL visual map above shows how ethical technology design can be mapped across all four AQAL quadrants, with Icelandic and English labels, examples, and reflection prompts.

🌀 This pairs beautifully with the Maslow pyramid. Together, they invite participants to explore not only which needs are met, but also how deeply and holistically technology supports human flourishing. You could use both visuals in a game-based format where teams design or critique tech tools through different quadrant lenses.

AQAL Ken Wilber

AQAL Integral Theory reframes the ethics of technology by asking: Are we addressing all dimensions of human experience? Are we considering not just external systems, but also inner development, cultural meaning, and collective values? It expands Maslow’s hierarchy into a multidimensional map for ethical reflection and design.

🧭 What Is AQAL?

Ken Wilber’s AQAL model stands for All Quadrants, All Levels, All Lines, All States, All Types. It’s a meta-framework that integrates:

- Four Quadrants:

  - Interior Individual (I) – thoughts, emotions, intentions

  - Exterior Individual (It) – behavior, biology, performance

  - Interior Collective (We) – culture, shared meaning, values

  - Exterior Collective (Its) – systems, structures, technology

- Levels: Developmental stages (e.g., egocentric, rational, pluralistic)

- Lines: Multiple intelligences (e.g., emotional, ethical, aesthetic)

- States: Temporary experiences (e.g., flow, trauma, meditation)

- Types: Personality, learning styles, cultural archetypes

🧠 AQAL’s Ethical Lens on Technology

Instead of asking only what needs are met, AQAL asks:

Which quadrant are we privileging—and which are we ignoring?

| Quadrant | Tech Example | Ethical Reflection |
| --- | --- | --- |
| I (Interior Individual) | Mindfulness apps, AI therapy | Does tech support inner growth or commodify it? |
| It (Exterior Individual) | Wearables, biometric tracking | Are we reducing humans to data points? |
| We (Interior Collective) | Online communities, memes | Are we fostering shared meaning or polarization? |
| Its (Exterior Collective) | Algorithms, platforms, infrastructure | Are systems inclusive, sustainable, and transparent? |

Key ethical insight: Technology often overemphasizes the “It” and “Its” quadrants—behavior and systems—while neglecting the inner and cultural dimensions that shape human dignity and motivation.

🔍 AQAL vs Maslow: A Comparison

| Maslow | AQAL |
| --- | --- |
| Linear hierarchy of needs | Multidimensional map of human experience |
| Focus on motivation and fulfillment | Focus on integration and perspective-taking |
| Useful for identifying unmet needs | Useful for diagnosing blind spots in design and ethics |
| Risks reductionism in tech ethics | Encourages holistic, pluralistic ethics |

🌱 Integral Ethics in Practice

AQAL invites second-tier ethics—a pluralistic, developmental approach that:

- Honors multiple truths across cultures and perspectives

- Integrates inner and outer change (e.g., healing trauma and redesigning systems)

- Supports vertical development, not just horizontal access

- Encourages conscious design, where tech reflects values, not just efficiency

This means ethical tech isn’t just about access or safety—it’s about awakening, belonging, and transformation across all quadrants.


Tech Ethics and Ken Wilber’s AQAL Model

Ken Wilber’s AQAL model (All Quadrants, All Levels, All Lines, All States, All Types) offers a holistic framework for understanding complex systems — including the ethical dimensions of technology. It helps us see how technology affects individuals, cultures, and systems simultaneously, and how ethical reflection must integrate all these perspectives.

1. All Quadrants: Four Perspectives on Tech Ethics

Ethical technology requires balance across all quadrants: not just technical compliance (Upper Right, UR) or moral intention (Upper Left, UL), but also cultural awareness (Lower Left, LL) and systemic accountability (Lower Right, LR).

2. All Levels: Stages of Ethical Maturity

Wilber’s model recognizes developmental stages — from egocentric to ethnocentric to worldcentric and beyond.

In tech ethics, this means evolving from:

- Profit-driven ethics → “What benefits my company?”

- Compliance ethics → “What keeps us legal?”

- Integral ethics → “What serves humanity and the planet?”

An integral approach encourages organizations to act from a worldcentric, or even kosmocentric, perspective, designing technology that uplifts all beings and ecosystems.

3. All Lines: Multiple Intelligences in Tech Ethics

Ethical decision-making in technology draws on several “lines” of development:

- Cognitive – understanding complex systems and consequences.

- Moral – discerning right action beyond self-interest.

- Emotional – empathy for users and affected communities.

- Spiritual – awareness of interconnection and purpose.

A mature ethical culture in tech integrates all these intelligences.

4. All States: Awareness and Presence

States of consciousness — from reactive to mindful — influence ethical choices.

Mindful awareness in design and leadership helps prevent unconscious harm and fosters compassionate innovation.

5. All Types: Diversity and Inclusion

Different personality types, cultural backgrounds, and organizational archetypes bring varied ethical perspectives.

An integral approach values this diversity, ensuring that technology reflects multiple human experiences rather than a narrow worldview.

In essence:

Applying Wilber’s AQAL model to tech ethics invites a fully integral approach, one that honors inner and outer dimensions, individual and collective realities, and multiple levels of human development. It moves the conversation from “Can we build it?” to “Should we build it, and for whose flourishing?”

Presentation – Tech Ethics and the AQAL Model by Guðbjörg Eggertsdóttir

System map with consequences

Systems thinking is a powerful way to analyze tech ethics. It helps you see technologies not as isolated tools but as parts of interconnected social, economic, and environmental systems.

Using systems thinking, you can explore:

- Interconnections and feedback loops

  - How technology influences human behavior, and how that behavior feeds back into further tech development.

  - For example, social media algorithms shape attention patterns, which in turn affect what data is collected and how algorithms evolve.

- Multiple perspectives

  - Ethical issues in tech often involve conflicting values, such as privacy vs. innovation or efficiency vs. fairness.

  - Systems thinking encourages including diverse stakeholders (users, developers, policymakers, communities) to understand these tensions.

- Unintended consequences

  - By mapping cause-and-effect relationships, you can anticipate ripple effects, such as how automation might improve productivity but also widen inequality.

- Leverage points for ethical change

  - Identifying where small interventions (like transparency standards or inclusive design practices) can shift the system toward more ethical outcomes.

- Dynamic evolution

  - Ethics in tech isn’t static. Systems thinking helps track how norms and impacts evolve over time as technologies and societies co-adapt.

In short, systems thinking moves ethical analysis beyond individual decisions or isolated technologies, toward understanding the broader patterns and structures that shape technological ethics as a living system.
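The feedback loop described above (algorithms shape attention, and attention shapes what the algorithms learn next) can be made concrete with a toy simulation. The Python sketch below is illustrative only: the function name `simulate_feedback_loop`, the `boost` parameter, and the simple growth rule are assumptions chosen for clarity, not a model of any real platform.

```python
def simulate_feedback_loop(steps=10, boost=0.3, initial_share=0.1):
    """Toy reinforcing loop between a recommender and user attention.

    share: fraction of attention going to one kind of content (0..1).
    boost: hypothetical strength with which the algorithm amplifies
           whatever already receives attention (assumed 0 < boost <= 1).
    """
    share = initial_share
    history = [share]
    for _ in range(steps):
        # More attention -> more data -> more exposure, limited by the
        # attention that is still available (1 - share).
        share = share + boost * share * (1 - share)
        history.append(share)
    return history


if __name__ == "__main__":
    for step, share in enumerate(simulate_feedback_loop()):
        print(f"step {step:2d}: attention share = {share:.2f}")
```

In this sketch, lowering `boost` slows the runaway growth. That is one way to picture a leverage point: a small change to the amplification rule, for instance through a transparency standard, changes where the whole loop ends up.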

Presentation – Life Evolving with Technology by Guðbjörg Eggertsdóttir

Cards

So glad you think so, Björg! 🌟 These cards are now ready to anchor a bilingual, metaphor-rich ethics dialogue. They are perfect for executive workshops, onboarding flows, or even creative retreats. You could pair them with:

- 🌀 A rotating board layout (circular or grid-based) to guide hat transitions

- 🎲 Game-based scoring or storytelling prompts under each hat

- 🧭 A “moral compass” metaphor to close the session, linking back to Wilber.

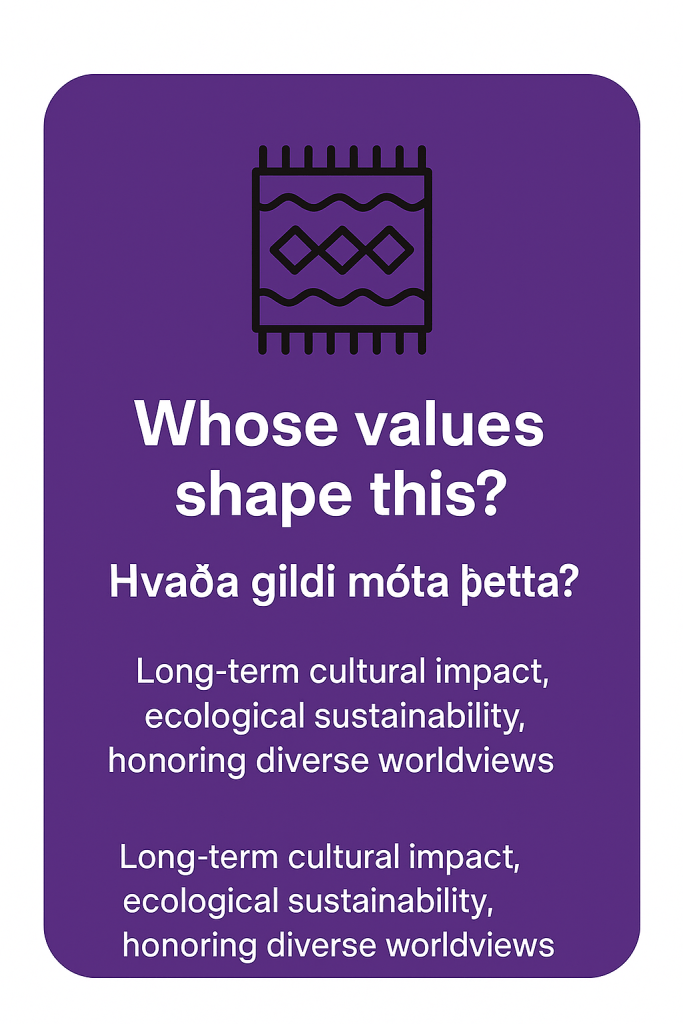

If I were to extend the Six Thinking Hats for tech ethics, I would add a Seventh Hat. I’d propose the Purple Hat 🟣 — Values & Legacy.

🟣 Purple Hat (Values & Legacy)

- Prompt: “Whose values shape this?” / “Hvaða gildi móta þetta?”

- Focus: Long-term cultural, ecological, and intergenerational impact.

- Metaphor: A tapestry or ancestral cloak — weaving together past wisdom and future responsibility.

- Tech Ethics Example:

  - How does this technology align with human dignity, ecological sustainability, or cultural preservation?

  - What legacy will it leave for future generations?

  - Does it honor diverse worldviews (local, indigenous, global)?

Why Add This Hat?

- The original six hats are powerful, but they don’t explicitly anchor values and long-term stewardship.

- In tech ethics, it’s not just about risks and benefits today — it’s about what world we’re shaping.

- This hat would resonate with your facilitation style, Björg, which often weaves spiritual symbolism, ecological awareness, and cultural context into workshops.

Would you like me to design a Purple Hat card (with icon, bilingual prompt, and example) so it visually integrates with your existing set?

Here’s your Purple Hat card concept, fully integrated with the others 🎴🟣.

- Color & Icon: Deep purple background with a woven tapestry or ancestral cloak icon.

- Prompt (bilingual):

  - “Whose values shape this?”

  - “Hvaða gildi móta þetta?”

- Focus: Values, legacy, intergenerational responsibility.

- Tech Ethics Example: Long-term cultural impact, ecological sustainability, honoring diverse worldviews.

This card acts as the seventh perspective. It ensures teams don’t just weigh risks and benefits. It also prompts them to ask: “What world are we leaving behind?”
